DeepSec by Vercel: The New Security Layer for AI-Coded Apps

Vercel just released DeepSec, an open-source AI-powered security scanner for large codebases

Vercel Labs released DeepSec last week.

Open-source, Apache 2.0, free.

CLI tool. Runs locally with `npx deepsec`. Plugs into existing Claude or GPT access through AI Gateway or direct API keys.

Tested internally for months on Vercel monorepos and production codebases like dub.co.

Works with Claude Opus 4.7 at max effort and GPT 5.5 at high reasoning. No security-specialized ("cyber-tuned") models needed.

How the scan runs

Five stages.

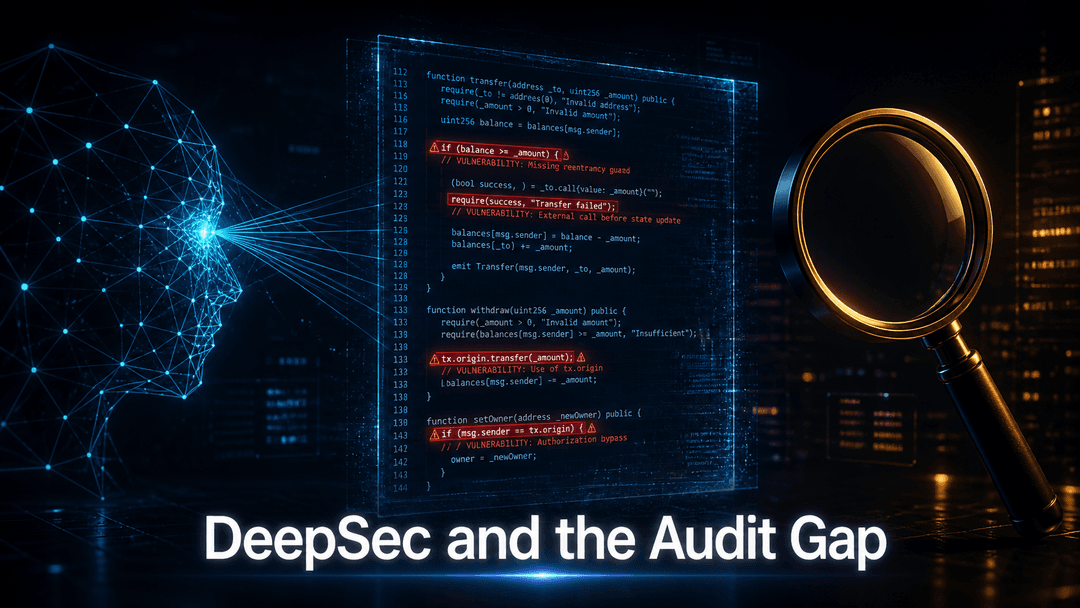

- Scan. Regex-based static analysis flags security-sensitive files.

- Investigate. AI agents trace data flows, check mitigations, and generate findings with severity ratings.

- Revalidate. A second agent pass verifies findings to cut false positives.

- Enrich. Git metadata and contributor info get attached to each finding.

- Export. Results ship as markdown or JSON for tickets or follow-up.
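To make the Scan stage concrete, here is a minimal Python sketch of regex-based flagging. The pattern names and regexes are illustrative assumptions, not DeepSec's actual matchers. The point is only that a cheap regex pass narrows which files the more expensive agent stages look at.

```python
import re

# Hypothetical patterns for illustration only; these are not DeepSec's real matchers.
SENSITIVE_PATTERNS = {
    "sql-string-building": re.compile(
        r"(SELECT|INSERT|UPDATE|DELETE)\b.*(\+|\$\{)", re.IGNORECASE
    ),
    "shell-exec": re.compile(r"\bchild_process\b|\bexec(Sync)?\s*\("),
    "jwt-handling": re.compile(r"\bjwt\.(sign|verify|decode)\s*\("),
}


def flag_files(files):
    """Return {path: [pattern names]} for files that match at least one pattern."""
    flagged = {}
    for path, text in files.items():
        hits = [name for name, rx in SENSITIVE_PATTERNS.items() if rx.search(text)]
        if hits:
            flagged[path] = hits
    return flagged


# Toy in-memory "repo" so the sketch runs without touching the filesystem.
sample = {
    "api/users.ts": 'db.query("SELECT * FROM users WHERE id = " + req.query.id)',
    "lib/format.ts": "export const upper = (s: string) => s.toUpperCase()",
    "api/session.ts": "const claims = jwt.verify(token, secret)",
}
flagged = flag_files(sample)
```

Only the two API files are flagged here. The formatting helper is skipped, so no agent time would be spent on it.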

Fan-out option. Up to 1,000 concurrent Vercel Sandboxes for parallel execution on massive repos.

Scans are idempotent. Interrupted runs resume from where they stopped.
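One common way to get that behavior is a checkpoint file that records finished work. The sketch below assumes that approach. DeepSec's real state format is not described here, and `run_resumable`, `flaky`, and the state file name are hypothetical.

```python
import json
import os
import tempfile


def run_resumable(files, investigate, state_path):
    """Process each file once; a rerun skips files already recorded in state_path."""
    done = {}
    if os.path.exists(state_path):
        with open(state_path) as fh:
            done = json.load(fh)
    for path in files:
        if path in done:
            continue  # finished in an earlier run
        done[path] = investigate(path)
        # Write to a temp file, then swap it in, so a crash never leaves a half-written state file.
        tmp_path = state_path + ".tmp"
        with open(tmp_path, "w") as fh:
            json.dump(done, fh)
        os.replace(tmp_path, state_path)
    return done


calls = []


def flaky(path):
    """Stand-in for an agent investigation that fails the first time it sees b.ts."""
    calls.append(path)
    if path == "b.ts" and calls.count("b.ts") == 1:
        raise RuntimeError("simulated interruption")
    return f"checked {path}"


state = os.path.join(tempfile.mkdtemp(), "scan-state.json")
files = ["a.ts", "b.ts", "c.ts"]
try:
    run_resumable(files, flaky, state)
except RuntimeError:
    pass  # first run dies partway through
result = run_resumable(files, flaky, state)  # second run picks up at b.ts
```

The second run never touches `a.ts` again, which matters when each unit of work is a paid agent call.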

Plugin system. Custom regex matchers tuned to your codebase, including auth patterns and data layers. You can prompt your coding agent to generate better matchers after an initial run.
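A codebase-specific matcher could look something like the sketch below. The `Matcher` shape and the `requireSession` helper are hypothetical, chosen for illustration. This is not DeepSec's plugin API. It shows the idea of flagging route handlers that never call your own auth wrapper.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Matcher:
    """Hypothetical matcher: flag a file if `present` matches and `absent` does not."""

    name: str
    present: re.Pattern
    absent: Optional[re.Pattern] = None

    def matches(self, text: str) -> bool:
        if not self.present.search(text):
            return False
        return self.absent is None or not self.absent.search(text)


# Assumes the codebase guards routes with a helper named requireSession (an invented name).
unauthenticated_route = Matcher(
    name="route-without-requireSession",
    present=re.compile(r"export\s+async\s+function\s+(GET|POST|PUT|PATCH|DELETE)\b"),
    absent=re.compile(r"\brequireSession\s*\("),
)

guarded = "export async function POST(req) { await requireSession(req); return save(req) }"
unguarded = "export async function DELETE(req) { return removeUser(req) }"
helper = "export function slugify(s) { return s.toLowerCase() }"

results = [unauthenticated_route.matches(src) for src in (guarded, unguarded, helper)]
```

A regex like this is a coarse filter. It cannot tell whether the auth call guards every code path, which is why matches are handed to an agent (and then a human) rather than reported directly.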

Where it shines and where it falls short

Strong fit. Large production monorepos with auth, database, and backend logic. Teams with engineers who can interpret findings, write custom matchers, and triage results.

False positive rate. 10 to 20 percent even after the revalidate step. Every critical finding still needs human review.
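To size the triage work, the quoted range translates directly into a count. A quick sketch, using a made-up run of 200 findings as the example input:

```python
def expected_false_positives(findings, low=0.10, high=0.20):
    """Apply the 10 to 20 percent range quoted above to a finding count."""
    return round(findings * low), round(findings * high)


# 200 findings is a hypothetical figure, not a measured DeepSec result.
fp_low, fp_high = expected_false_positives(200)
```

Under that assumption, somewhere between 20 and 40 of the 200 findings would be noise, and a reviewer cannot know which ones without reading them.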

Cost. Thousands of dollars per scan on very large codebases. Heavy agent usage is the cost driver.

Coverage. Strong on web apps and services out of the box. Pure libraries and frameworks need custom plugins.

What automated agent scans still miss:

- Business logic flaws that depend on knowing the app's purpose.

- Authorization edge cases tied to user state and role transitions.

- Prompt injection paths through MCP connectors and AI agent boundaries.

- Race conditions in async flows that surface only under specific conditions.

These need a human testing the running app, not a static scan.
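The race-condition case is easy to show in miniature. The sketch below is a generic check-then-act bug in Python's `asyncio`, with an invented `spend` function and credit balance. Each line looks reasonable when read statically, yet two concurrent requests overspend.

```python
import asyncio

balance = {"credits": 100}


async def spend(amount):
    # Check...
    if balance["credits"] >= amount:
        # This await stands in for a database or network call; other tasks run here.
        await asyncio.sleep(0)
        # ...then act. Another task may have passed the same check in between.
        balance["credits"] -= amount
        return True
    return False


async def main():
    # Two requests arrive at the same time, each asking for 80 of the 100 credits.
    return await asyncio.gather(spend(80), spend(80))


results = asyncio.run(main())
```

Both calls succeed and the balance ends below zero. The bug only appears when two requests interleave, which is why it tends to surface under load against a running app rather than in a file-by-file read.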

What this means for vibe-coded apps

Most apps shipping today are not Vercel-scale monorepos. Small teams. AI coding agents. Fast deploys. Faster iteration.

The risk profile is different:

- Dependencies run deeper than the team typically audits.

- Code is generated, often not fully understood by the people shipping it.

- The AI surface area itself becomes part of the threat model.

DeepSec was built for mature engineering orgs running large repos. The output is most useful when an engineering team can act on it.

For AI-generated apps and agent deployments, layer manual review on top. Someone reading the code the way an attacker would. Testing the running app. Probing prompt injection paths and connector scopes. Finding what looks safe in static analysis but breaks in production.

If you have a large codebase, run DeepSec. The findings are actionable and the tool itself is free, though the model usage behind each scan is not.

If you are shipping AI-assisted apps with a small team, automated scanning alone leaves real gaps. Manual auditing, including black-box testing of the running app, catches much of what the scanner misses.