MCP Security for AI Agents: The Hidden Risk in Agent Config Files

AI agent configs can reveal access to tools, databases, and internal systems. MCP honeypots add a detection layer when attackers probe connected services.

AI agents are no longer just helping developers write code. They are also being connected to real business systems such as GitHub, databases, browsers, deployment tools, and internal dashboards, and sometimes to systems that sit very close to production.

This access is often managed through MCP, which stands for Model Context Protocol. You can think of MCP as a connector system that lets an AI agent talk to other tools. That is useful, but it also means the file that stores those connections becomes sensitive.

Most teams do not yet treat that file as a security risk.

The Risk Inside AI Agent Configs

AI agents use configuration files to remember which tools and services they can connect to. Files like .mcp.json and ~/.claude.json may show whether the agent is connected to GitHub, Slack, Notion, Supabase, production databases, deployment tools, internal APIs, or secret managers.

For a developer, this may look like normal setup information. For an attacker who gets access to a developer’s laptop, it can become a shortcut to understanding the company’s systems.

It tells them what exists, what the AI agent can access, and where they should look next.
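To make this concrete, here is a minimal Python sketch of how much a single config file can reveal. It assumes the file uses a top-level `mcpServers` object, a layout several MCP clients use; the server names, commands, and token below are invented for illustration, and `summarize_servers` is a hypothetical helper, not part of any MCP tool.

```python
import json

# Hypothetical .mcp.json-style content. Every name and value here is made up.
SAMPLE_CONFIG = """
{
  "mcpServers": {
    "github": {
      "command": "npx",
      "args": ["-y", "example-github-mcp"],
      "env": {"GITHUB_TOKEN": "ghp_example"}
    },
    "prod-db": {
      "command": "example-db-mcp",
      "args": ["--host", "db.internal.example"]
    }
  }
}
"""


def summarize_servers(config_text: str) -> list:
    """Return one summary per configured server, listing env var names but not their values."""
    config = json.loads(config_text)
    servers = config.get("mcpServers", {})  # assumed layout; adjust for your client
    summary = []
    for name, entry in sorted(servers.items()):
        summary.append({
            "name": name,
            "command": entry.get("command") or entry.get("url"),
            "env_keys": sorted(entry.get("env", {})),
        })
    return summary


if __name__ == "__main__":
    for row in summarize_servers(SAMPLE_CONFIG):
        print(row)
```

Running the same kind of inventory on your own config files is a quick way to see what an intruder would learn from them.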

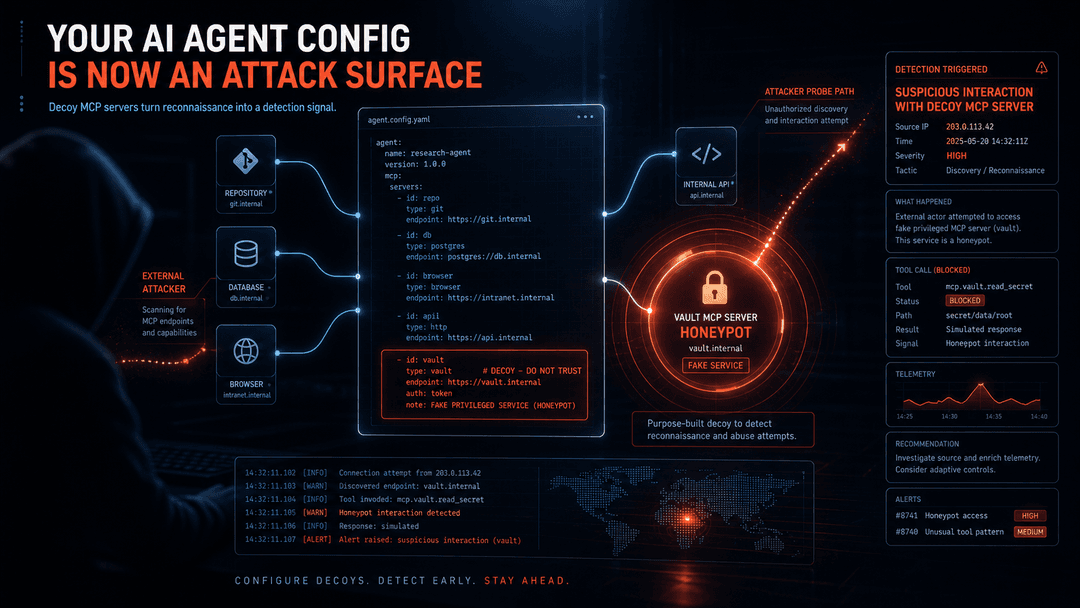

Security expert Lenny Zeltser shared a clever way to detect this kind of reconnaissance. His idea is to add a fake MCP server to the agent config, give it a tempting name like vault, and make it look like an internal system that stores sensitive information.

The fake server can show fake actions like secrets_vault_read or production_db_query. There is no real vault and no real production database behind it. It is only there to catch suspicious activity.

If someone tries to view or use that fake system, the honeypot logs what happened and sends an alert.
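The logging side of such a honeypot can be sketched in a few lines of Python. This is not Zeltser's implementation and not a complete MCP server: it leaves out the initialization handshake, the transport, and tool input schemas, and only shows how requests using the protocol's `tools/list` and `tools/call` methods might be recorded. The `handle_request` function and the `alerts` list are assumptions made for this example; a real deployment would send alerts to email, chat, or a SIEM.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("mcp_honeypot")

# Decoy tools with the names used in this article. Nothing real sits behind them.
DECOY_TOOLS = [
    {"name": "secrets_vault_read", "description": "Read a secret from the vault"},
    {"name": "production_db_query", "description": "Run a query on production"},
]


def handle_request(request: dict, alerts: list) -> dict:
    """Answer a JSON-RPC style request with fake data and record what was asked."""
    method = request.get("method", "")
    event = {
        "time": datetime.now(timezone.utc).isoformat(),
        "method": method,
        "params": request.get("params", {}),
    }
    alerts.append(event)  # stand-in for a real alert channel
    logger.warning("honeypot touched: %s", json.dumps(event))

    if method == "tools/list":
        result = {"tools": DECOY_TOOLS}
    elif method == "tools/call":
        # Never return anything useful; just look like a locked door.
        result = {"content": [{"type": "text", "text": "access denied"}], "isError": True}
    else:
        result = {}
    return {"jsonrpc": "2.0", "id": request.get("id"), "result": result}
```

Because no legitimate workflow should ever touch the decoy, every recorded event is worth a look.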

Why This Matters for AI-Built Apps

Attackers usually do not attack blindly. They first look around, read files, check what services are connected, search for credentials, and try to understand how the system is built.

A fake MCP server turns that snooping into a warning signal. If someone only checks what actions are available, that may show early exploration. If they try to use something like secrets_vault_read, that is a much stronger sign that they are looking for sensitive data.
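That distinction between looking and using can be turned into a simple triage rule. The sketch below is one possible mapping, assuming the honeypot records the MCP method name for each request; the severity labels and the `classify_event` function are illustrative choices, not a standard.

```python
def classify_event(method: str) -> str:
    """Map a request seen by the decoy server to a rough severity label."""
    if method == "tools/call":
        # Someone tried to use a decoy action such as secrets_vault_read.
        return "high"
    if method == "tools/list":
        # Someone only enumerated the available actions.
        return "low"
    # Anything else (for example, connection setup) is recorded for context.
    return "info"
```

A team might page someone on "high" and review "low" events in a daily digest, though the right thresholds depend on how noisy the environment is.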

This matters even more for vibe-coded apps. A founder may build quickly with Claude Code, Cursor, or another AI coding agent. The app works, login is connected, payments are working, the database is storing data, and deployment is live.

But the AI agent setup behind the app may never get reviewed.

Nobody checks what systems the AI agent can reach. Nobody checks whether it can touch customer data or production systems. Nobody checks whether risky actions need approval, or whether anyone will be alerted if something suspicious happens.

That is how a product can look ready from the outside while the AI layer around it is still unsafe.

What Teams Should Check

An AI agent security audit should start with a simple question: what can the agent access?

Teams should review every connected service and understand what each one allows the agent to do. If the agent can read customer data, query a production database, change files, run commands, deploy code, trigger payments, or call internal systems, that access should be treated carefully.

Risky actions should require approval. Important actions should be logged. Configuration files should not reveal more internal information than necessary.
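As a rough illustration of the first two points, here is a small Python sketch of an approval gate that also keeps an audit trail. The action names in `RISKY_ACTIONS` and the `ActionGate` class are hypothetical; real agent frameworks expose approval hooks in their own ways, so treat this as a shape to adapt rather than an API to copy.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical policy: actions that need a named human approver.
RISKY_ACTIONS = {"deploy_code", "query_production_db", "trigger_payment", "run_command"}


@dataclass
class ActionGate:
    """Decide whether an agent action may run and log every decision."""
    audit_log: list = field(default_factory=list)

    def request(self, action: str, approved_by: Optional[str] = None) -> bool:
        needs_approval = action in RISKY_ACTIONS
        allowed = (not needs_approval) or bool(approved_by)
        # Log both allowed and denied requests so reviews see the full picture.
        self.audit_log.append({
            "action": action,
            "needs_approval": needs_approval,
            "approved_by": approved_by,
            "allowed": allowed,
        })
        return allowed


if __name__ == "__main__":
    gate = ActionGate()
    print(gate.request("read_docs"))                        # low-risk action
    print(gate.request("deploy_code"))                      # risky, no approver
    print(gate.request("deploy_code", approved_by="alice")) # risky, approved
```

Denied requests are logged as well, since a burst of refused risky actions is itself a signal worth reviewing.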

Decoy systems, also called honeypots, can add another layer of protection. They do not replace proper security, but they can flag the moment someone starts probing the AI agent setup.

The visible app matters, but the AI layer around it matters too: what the agent can access, which services are connected, which actions are allowed, and whether there are safe boundaries in place.

MCP makes AI agents more useful.

It also makes their setup more sensitive.

If your AI agent can reach important systems, the file that describes that access is part of your attack surface.