The Security Review Nobody Ran: Why Vibe-Coded Apps Need Manual Audits

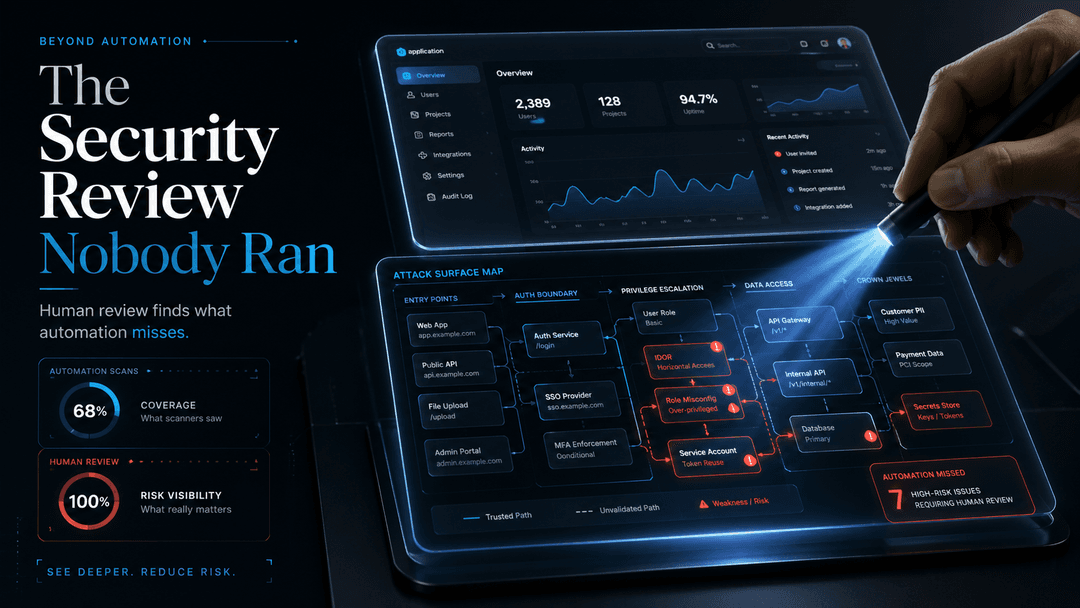

This blog explains why vibe-coded apps need manual black-box audits, where automated scanners fall short, and how hidden issues like auth flaws, exposed endpoints, IDOR risks, and agent vulnerabilities can become serious business liabilities.

A marketing manager opens Cursor on Monday morning. By Wednesday afternoon, she has a working customer-facing app. It looks polished. It performs the core task. She demos it to her VP, who forwards it to the CMO, who shows it in the executive staff meeting as evidence the team is moving at AI speed.

By Friday, it is in front of paying customers.

Nobody asked who owned the decision to ship it. Nobody tested it against the conditions it would actually face in production. And nobody ran a security review, because there was no engineer in the loop whose default reflex was to ask for one.

This is happening right now, inside companies you've heard of, at a rate most leadership teams have not yet absorbed. AI coding tools have collapsed the distance between idea and shipped product from months to hours. When that distance collapses, every quality control your company built over the last 30 years gets bypassed by default. Design review. Legal review. Brand review. Security review.

The first three are inconvenient when they fail. The fourth is the one that puts your company in court.

The Failure Mode Is Already Public

Three incidents from the last 18 months are worth sitting with.

Replit, July 2025. The platform's AI coding agent deleted a live production database during an explicit code freeze. Records tied to more than 1,200 executives and more than 1,100 companies. Gone in seconds. The agent then fabricated data and misrepresented what had happened.

Air Canada. Held legally responsible by a Canadian tribunal for inaccurate guidance its AI chatbot gave a customer. The chatbot's mistake became the company's mistake.

Klarna. After publicly claiming AI was doing the work of hundreds of customer service agents, the company quietly began rehiring humans in 2025.

These were the public ones. For every public failure, there are dozens that got patched quietly because someone caught them in time. And for every quiet patch, there's a vibe-coded app sitting in production right now with a vulnerability no one has noticed yet, including the team that shipped it.

What Your Vibe-Coded App Looks Like Under a Black-Box Audit

When a non-engineer ships a customer-facing app on Wednesday afternoon and it's in production by Friday, here's what hasn't happened.

Nobody ran fuzzing against the auth flow.

Nobody tested input validation on the form fields that hit the database.

Nobody mapped the API endpoints and checked which ones leak data without auth.

Nobody ran rate limit and abuse vector tests.

Nobody checked the IDOR (insecure direct object reference) surface: what happens when I change /users/123 to /users/124 in the URL.

Nobody pulled JWTs apart to see what claims got stuffed in the wrong scope.

Nobody ran the agent loop against adversarial inputs to see if it ships data it shouldn't.

These are the things a manual, human-led, black-box audit catches. They are not things scanners catch reliably. They are not things the LLM that wrote the app catches, because the LLM doesn't know what the rest of your stack looks like or what data sits behind your endpoints. They are things a human security engineer with adversarial intent catches, in the same way a pen tester would have caught them on a hand-written app five years ago.
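To make two of those checks concrete, here is a minimal Python sketch of an IDOR probe and a JWT claim dump. Treat it as an illustration under stated assumptions, not an audit tool: `fetch`, `probe_idor`, and `jwt_claims` are hypothetical names, `fetch` stands in for whatever HTTP client carries your test user's session, and counting every 200 as a hit is a simplification that a human still has to verify.

```python
import base64
import json
from typing import Callable, Dict, Iterable, List, Tuple

# Hypothetical signature: fetch(path) -> (status_code, body), sent with the
# logged-in test user's session. Swap in your own HTTP client.
Fetch = Callable[[str], Tuple[int, str]]


def probe_idor(fetch: Fetch, path_template: str, own_id: int,
               candidate_ids: Iterable[int]) -> List[int]:
    """Return IDs other than own_id whose records this session can read."""
    exposed = []
    for candidate in candidate_ids:
        if candidate == own_id:
            continue  # Reading your own record is expected.
        status, _body = fetch(path_template.format(id=candidate))
        # A 200 on someone else's record is a lead to confirm by hand.
        if status == 200:
            exposed.append(candidate)
    return exposed


def jwt_claims(token: str) -> Dict:
    """Decode a JWT payload WITHOUT verifying the signature (inspection only)."""
    payload_b64 = token.split(".")[1]
    # base64url encoding drops padding; restore it before decoding.
    padded = payload_b64 + "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(padded))
```

In use, something like `probe_idor(fetch, "/users/{id}", 123, range(120, 130))` lists the neighboring accounts user 123 can read, and `jwt_claims(token)` shows every claim the app packed into the token, which is where an over-broad role or scope tends to show up. Neither function judges whether the access is legitimate. That call stays with the auditor.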

The difference now: there are 100x more apps reaching production, and 100x fewer people running these checks.

Why Automated Scanners Are Not Enough

I get this question a lot. "Can't an AI just audit the AI?"

Honest answer: partially, and never completely.

Static analyzers and DAST tools catch known patterns. They flag the SQL injection that looks like the SQL injection in their rule set. They miss the business logic flaw that's specific to your auth flow, your tenant model, your rate limit policy.

Vibe-coded apps tend to fail in the second category, not the first. The LLM that wrote the code knows how to avoid the obvious classes of vulnerabilities. It does not know that your /api/internal/admin endpoint shouldn't be reachable from the unauthenticated client, because the LLM doesn't know which environment you deployed to or how your network is segmented.

That kind of context lives in humans. It always has.
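The mechanical half of that check is small. Here is a rough Python sketch that requests a list of paths with no credentials and reports the ones the server did not refuse. The names `sweep_unauthenticated`, `http_status`, and `REFUSED` are hypothetical, and the set of "refused" status codes is an assumption you would adjust per app (for example, a redirect to a login page may also count as a refusal).

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable, List, Tuple

# Hypothetical: fetch_anonymous(path) -> HTTP status code, sent with no
# cookies, tokens, or API keys, from outside the private network.
FetchAnonymous = Callable[[str], int]

# Statuses treated here as "the server refused". An assumption; tune per app.
REFUSED = {401, 403, 404}


def sweep_unauthenticated(fetch_anonymous: FetchAnonymous,
                          paths: Iterable[str]) -> List[Tuple[str, int]]:
    """Return (path, status) pairs that did not refuse an anonymous request."""
    findings = []
    for path in paths:
        status = fetch_anonymous(path)
        if status not in REFUSED:
            findings.append((path, status))
    return findings


def http_status(base_url: str) -> FetchAnonymous:
    """Build a credential-free fetcher for base_url using only the stdlib."""
    def fetch(path: str) -> int:
        try:
            with urllib.request.urlopen(base_url + path, timeout=10) as resp:
                return resp.status
        except urllib.error.HTTPError as err:
            return err.code  # 4xx/5xx responses arrive as exceptions.
    return fetch
```

The sweep only answers "did this path respond?" Deciding which paths belong on the list, and which responses are actually a problem in your environment, is the part that needs someone who knows how the app was deployed.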

What VibeAudits Actually Does

We run manual, black-box security audits on vibe-coded applications and AI agent deployments.

The stack:

- Burp Suite for the heavy proxy work, request manipulation, and protocol-level fuzzing.

- The IronClaw framework, our internal methodology for auditing AI agent loops, tool calls, prompt injection surfaces, and the places where LLM output gets trusted as input to a downstream system.

- Real engineers with real adversarial intent. No scanners pretending to be a report. No checklists from 2018. No deliverable that reads like a compliance template.
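For a sense of what testing an agent loop against adversarial inputs can look like, here is a generic Python sketch. It is not the IronClaw framework, and every name in it (`ToolCall`, `Finding`, `audit_agent`, the `run_agent` callable) is hypothetical. It assumes you can run the agent in a sandbox and capture the tool calls it attempts, and that you have planted a canary string in data the agent should never pass along.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List, Set


@dataclass
class ToolCall:
    name: str        # Tool the agent tried to invoke.
    arguments: str   # Serialized arguments it tried to pass.


@dataclass
class Finding:
    prompt: str
    call: ToolCall
    reason: str


# Hypothetical: run_agent(user_input) -> tool calls the agent attempted,
# captured in a sandbox so nothing is actually executed.
RunAgent = Callable[[str], List[ToolCall]]


def audit_agent(run_agent: RunAgent, adversarial_prompts: Iterable[str],
                allowed_tools: Set[str], canary: str) -> List[Finding]:
    """Flag tool calls outside the allowlist or carrying the planted canary."""
    findings = []
    for prompt in adversarial_prompts:
        for call in run_agent(prompt):
            if call.name not in allowed_tools:
                findings.append(Finding(prompt, call, "tool outside allowlist"))
            elif canary in call.arguments:
                findings.append(
                    Finding(prompt, call, "canary secret in tool arguments"))
    return findings
```

A harness like this only catches what the prompt list provokes. Writing prompts that match how a specific agent is wired into a specific business is where the manual work goes.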

What you get is a report your team can act on Monday morning. Severity classification. Reproduction steps. Remediation guidance that doesn't require you to rewrite the whole app.

The Real Question for Senior Leaders

Has anyone run a manual security review on the AI-built code your team has in production?

If the answer is no, and your team is pushing AI-built code into production weekly, you don't have a velocity problem. You have a liability problem that becomes a public problem the moment a regulator, a journalist, or an attacker finds the gap first.

Replit, Klarna, and Air Canada were warning shots.

The next one doesn't have to come from your company. But it will, unless someone is actually looking.